The Infrastructure Gap

Across ThunderScan's analysis of production databases in over a thousand B2B software companies, a consistent failure pattern emerges: AI-powered features underperform not because the models are poorly trained, but because the underlying data infrastructure was never designed to support cognitive workloads. The embedding model is sound. The prompt engineering is competent. The retrieval pipeline, however, is querying a database that was architected for a fundamentally different purpose — and the mismatch produces outputs that are subtly, persistently wrong in ways that erode user trust without triggering any obvious error.

The root of this problem is structural. Relational databases — and the CRUD operational model they implement — were designed to store discrete facts and retrieve them on demand with exact precision. They are extraordinarily effective at this task. What they were not designed to do is support the kinds of queries that AI systems require: semantic similarity search, temporal reasoning, cross-session memory, graph traversal, and multimodal retrieval. These are not missing features that a configuration change can unlock. They represent a different computational paradigm entirely.

The core thesis of this paper: The transition from CRUD to cognition is not a model problem — it is a data architecture problem. Teams that recognize this distinction and address it structurally build AI features that work reliably at scale. Teams that do not spend months debugging infrastructure failures that present as model failures.

A Half-Century of Transactional Databases

To evaluate why databases are structurally misaligned with AI requirements, it is necessary to understand the design goals they were actually built to achieve.

In 1970, Edgar Codd published his relational model at IBM — a rigorous application of set theory and predicate logic to the problem of data management. The model's premise was elegant: organize data into normalized tables, enforce relationships through keys, and expose a declarative query interface. For the next three decades, every major enterprise system ran on this foundation, because the operational requirement was well-defined: store facts reliably, retrieve them exactly, update them atomically.

SQL, normalization, ACID transactions. The job: reliably record business events. Oracle, IBM DB2, and later PostgreSQL and MySQL become the backbone of every enterprise. CRUD is invented — and it is exactly what was needed.

The internet breaks relational assumptions. Google, Amazon, and Facebook need horizontal scale that SQL cannot provide at their velocity. NoSQL emerges — MongoDB, Cassandra, Redis, DynamoDB. The trade: consistency and structure for speed and scale. The world accepts "eventually consistent" as a reasonable bargain.

Reporting queries begin killing production databases. The industry separates OLTP from OLAP. Data warehouses — Redshift, BigQuery, Snowflake — take over analytics. Data pipelines grow complex. Two databases now do the work one used to do, and someone has to keep them in sync.

Graph databases for relationships. Time-series for IoT. Document stores for flexibility. Search engines for full-text. The "right tool for the job" philosophy spawns a 20-database production stack that no one fully understands.

AI arrives — and none of the above was designed for it. LLMs need semantic search. Agents need persistent memory. RAG pipelines need temporal context. Recommendation engines need graph traversal and vector similarity in the same query. The infrastructure wasn't built for cognition. It was built for storage.

Each architectural era addressed a genuine operational constraint. None was designed in anticipation of a system that must reason over data rather than merely retrieve it.

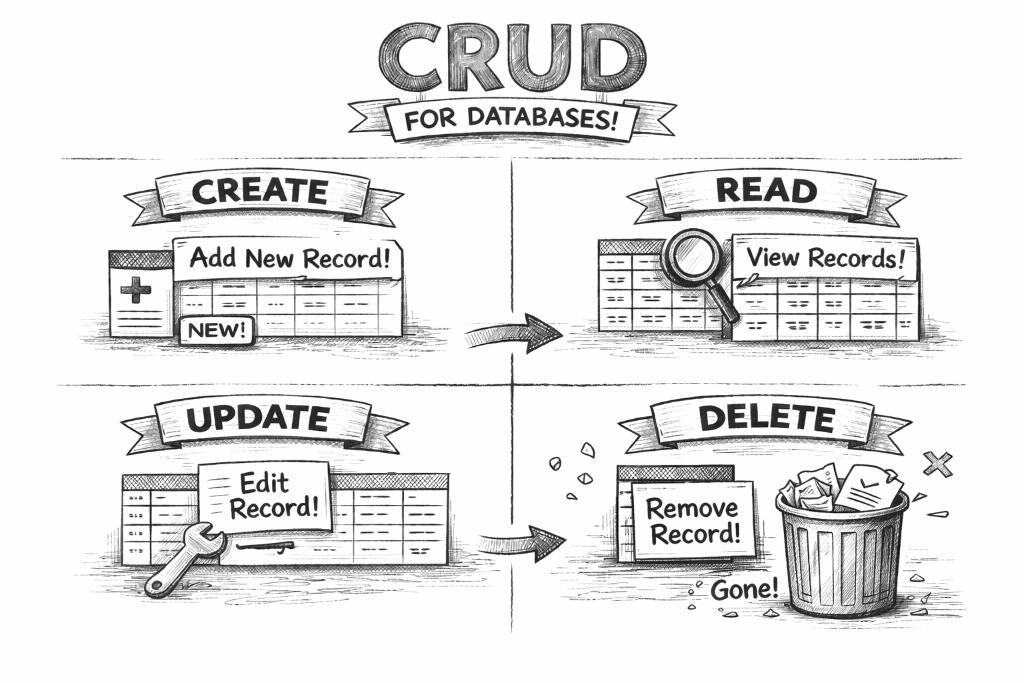

What Is CRUD?

A precise understanding of the CRUD model is necessary before analyzing its limitations in an AI context. CRUD — Create, Read, Update, Delete — defines the four canonical operations through which software systems interact with persistent data. The model has been the foundation of application development since the relational model was established in 1970, and it remains the dominant interaction pattern in production systems today.

The four CRUD operations — the complete vocabulary of traditional database interaction.

Each letter maps to a precisely defined database operation:

Insert a new record into the database. A user signs up → a new row is written to the users table. An order is placed → a new row appears in orders.

Retrieve existing records. A dashboard loads → a SELECT query fetches rows matching a condition. A search box fires → the database returns matching results.

Modify an existing record. A user changes their email → one row is updated. An order ships → its status field changes from 'pending' to 'shipped'.

Remove a record permanently. A user cancels their account → their row is deleted. A product is discontinued → it is removed from the catalog.

For fifty years, virtually every business application — from payroll systems to e-commerce platforms to CRM tools — was built on combinations of these four operations. The pattern's power lies in its precision: any interaction that can be expressed as a Create, Read, Update, or Delete will be executed by a relational database with sub-millisecond latency, full ACID compliance, and predictable behavior at scale.

Why CRUD Works So Well

CRUD's genius is its precision. Every operation has a deterministic, exact answer:

- Exact key lookup: "Get user with id=42" always returns exactly one row — or nothing.

- Atomic writes: Either the record is saved completely, or it isn't. No partial states.

- Predictable performance: With proper indexing, CRUD queries return in microseconds regardless of table size.

- ACID guarantees: Transactions are Atomic, Consistent, Isolated, and Durable — the bedrock of financial and business data integrity.

The CRUD model also maps directly to how organizations conceptualize business records: entities are created, queried, modified, and eventually removed. The model is intuitive, standardized, and teachable. SQL — the language implementing CRUD on relational systems — became the most widely deployed query language in computing history.

CRUD is not a limitation. It is a precisely engineered solution to a well-defined problem. The challenge is that the problem AI systems present is categorically different — and was never part of CRUD's design specification.

When AI systems are layered onto CRUD-optimized databases, they encounter a fundamental category error: the database is designed to answer "what is the current value of this field?" — not "what does this data mean in context?" That distinction is the analytical focus of everything that follows.

The Structural Mismatch

The databases underpinning most AI-powered features today were not designed with cognitive workloads in mind. They were designed to answer transactional queries:

- "What did user 42 order last Tuesday?"

- "How many active subscriptions do we have?"

- "Update the status of order 1,337 to 'shipped'."

These are CRUD queries. They are precise, deterministic, and well-served by any properly indexed relational database — executing in under 5 milliseconds with ACID guarantees at high concurrency. That performance is a genuine engineering achievement, not a shortcoming.

AI systems, however, require a fundamentally different query contract:

- "What does this user's behavior over the last six months suggest about their intent?"

- "Which documents in our knowledge base are semantically similar to this support ticket?"

- "Given everything this agent has learned across 200 conversations, what does it remember about this customer?"

A traditional database is extraordinarily good at retrieving the exact record you ask for. It has no concept of approximately relevant, contextually similar, or temporally significant. These are not missing features. They are fundamentally different modes of computation — and they require fundamentally different architectural thinking.

Organizations that recognize this architectural distinction early — and design deliberately around it — produce AI features that perform reliably in production. Those that layer AI directly onto an unreconstructed CRUD stack consistently encounter problems that present as model failures but are, on investigation, infrastructure failures.

The Five Cognitive Gaps

Through ThunderScan's analysis of production databases, five specific structural gaps have been identified that prevent a conventional CRUD database from supporting cognitive workloads. None are catastrophic in isolation. Together, they account for the majority of AI production failures — incidents that occur not during training, but at inference time, under real load, with real users.

Relational databases match data by exact value. AI needs to match by meaning. A support ticket that says "my account won't load" and a help article titled "login troubleshooting" share no words in common — but they are semantically related. Without a vector embedding layer alongside your structured data, your AI is working blind, limited to keyword coincidence rather than conceptual understanding.

Traditional databases are designed to reflect current state. They store what is true now — and overwrite the past. An AI agent, by contrast, learns from history. It needs to know not just that a customer is on Plan B today, but that they were on Plan A six months ago, downgraded after a billing dispute, re-engaged after a support call, and have been a net promoter since. That narrative is the intelligence. Most databases discard it silently with every UPDATE.

Foreign keys model relationships at one degree of separation. But intelligence — human or artificial — thinks in networks. "Who influenced this user's purchase?" requires traversing a graph of referrals, social connections, shared account activity, and communication history — a query that explodes into hundreds of self-joins in SQL, and is native in a graph database. AI-powered features like fraud detection, recommendation engines, and influence mapping all live here.

Most production databases have a created_at column. Very few have true bi-temporal modeling — the ability to distinguish between when something happened in the real world and when we recorded it in the database. For AI training, this difference is critical. A model trained on data that conflates transaction time with record time will learn subtly incorrect causal patterns — and produce subtly incorrect predictions that are nearly impossible to debug.

Real-world intelligence is multimodal. A customer's support email, their product screenshot, their voice note — these carry as much signal as any structured field. A CRUD database stores a file path and calls it done. A cognitive data layer can index the semantic content of that email, the visual embedding of that screenshot, and the sentiment trajectory of that voice note — making them first-class citizens of your data model, not orphaned blobs in an S3 bucket.

What AI Actually Needs From a Database

To be concrete about the requirements: when an AI-powered product feature is built — a recommendation engine, a support copilot, a document retrieval system, or an autonomous agent — the data infrastructure must support a query contract that CRUD was never designed to fulfill.

| What AI Needs | CRUD Database | Cognitive Layer Required |

|---|---|---|

| Semantic similarity search "Find content related to this query" |

❌ Only exact/LIKE matches | Vector index (HNSW/IVF) over embeddings |

| Long-term agent memory "What does this agent know about user X?" |

❌ No cross-session persistence concept | Memory store: vector + metadata + recency decay |

| Temporal event replay "What was true about user X in Q3 2024?" |

❌ Overwrites past state on UPDATE | Append-only event log + bi-temporal indexing |

| Graph traversal "Who are the 3-hop connections of this user?" |

❌ Self-joins become exponentially slow | Graph store (or recursive CTEs with LATERAL) |

| Hybrid retrieval "Docs similar to query AND from org_id=42" |

❌ Cannot filter by semantic relevance | Hybrid index: vector similarity + metadata filters |

| Feature freshness tracking "Are these embeddings still valid?" |

❌ No embedding staleness concept | content_hash + embedding_updated_at columns |

In practice, most engineering teams eventually construct an improvised layer between their model and their database — assembled from caching services, background jobs, and ad hoc scripts. Teams that architect this layer intentionally from the outset, treating it as a first-class system component, consistently produce AI features with significantly greater reliability and longevity.

The Cognitive Data Stack

The answer is not to throw away your relational database. It is to recognize it as one layer in a multi-layer cognitive stack — each layer purpose-built for a different kind of intelligence operation.

THE COGNITIVE DATA STACK · AI APPLICATION LAYER AT TOP

Notice what this stack does differently from a "just use Postgres" architecture: it separates concerns. The transactional layer does what it has always done brilliantly — ACID guarantees, referential integrity, business logic enforcement. The semantic layer adds the ability to query by meaning. The memory and temporal layer gives the AI a sense of history. The object layer stores raw artifacts. Each layer has one job.

How an AI Agent Actually Uses This Stack

When a support copilot answers a question, it isn't executing one query. It is orchestrating a retrieval pipeline across multiple layers in milliseconds:

Each of those queries targets a different layer of the cognitive stack. Removing any single layer — the absence of embeddings, missing event history, or no memory store — degrades the AI's output. The degradation is typically not total failure, but progressive loss of precision that manifests as eroding user trust over time.

An Additive Migration Path

A common misreading of the CRUD-to-cognition transition is that it requires wholesale replacement of existing relational infrastructure. This paper does not advance that argument.

A PostgreSQL database — properly normalized, with enforced foreign keys, correct indexes, and a clean schema — is not the obstacle to AI readiness. It is the foundation. The CRUD layer is not wrong; it is incomplete. The evolution from CRUD to cognition is additive, not replacive.

pgvector to it, or stand up a separate vector store (Pinecone, Qdrant) for semantic search. Don't replace — augment.CREATE TABLE user_events (id, user_id, event_type, payload JSONB, occurred_at). Make it append-only. This single table gives your AI a sense of history that no amount of ML can reconstruct from mutable rows.embedding_content_hash TEXT lets you detect when source data has changed and embeddings have gone stale — preventing your AI from answering based on outdated semantic indexes.agent_memories (agent_id, user_id, summary, context_type, relevance_score, expires_at). This is the difference between an AI that forgets everything after each conversation and one that actually learns your users.None of these steps require a large-scale migration. The highest-impact additions can be implemented incrementally — one table, one index, one pipeline stage at a time — with each addition unlocking cognitive capabilities that prompt engineering alone cannot produce.

The most consequential AI infrastructure improvements are frequently the simplest: a schema addition that has been deferred, an append-only event table that does not yet exist, a content hash column that enables stale embedding detection. The engineering debt limiting AI performance is rarely in the model. It is in a missing column.

What Comes Next

The shift from CRUD to cognition is not a transient trend in the technology adoption cycle. It is a permanent expansion of what data infrastructure must support — driven by a category of software (AI agents, LLM-powered applications, semantic retrieval systems) that did not exist in production form five years ago.

The organizations that will demonstrate durable competitive advantage over the next five years are not necessarily those with access to superior models. Foundation models are commoditizing at a rapid pace. The durable differentiator will be the depth, structure, and temporal completeness of the underlying data — organized in a form that a cognitive system can reason over effectively.

The database is no longer a passive record-keeper. It is the memory substrate of intelligent systems — and memory, the capacity to accumulate, connect, and recall context over time, is the operational basis of intelligence itself.

Codd's relational model defined what computers could do with discrete facts. The current transition asks a more demanding question: what can they do with the meaning of those facts? The answer requires a different kind of database — not instead of the one we have, but layered above it, extending its reach from storage into comprehension.

Is Your Database Cognitively Ready?

ThunderScan analyzes your production schema for all five cognitive gaps — semantic layer absence, temporal blindness, memory architecture, relationship depth, and modality isolation — and produces a prioritized remediation roadmap.

Run a Free Cognitive ScanSources & Further Reading

- Codd, E.F. (1970). "A Relational Model of Data for Large Shared Data Banks." Communications of the ACM.

- Gartner. (2025). "Top Data & Analytics Trends for 2025." Gartner Research.

- Weaviate. (2025). "The Future Speaks in Vectors: Why AI-native infrastructure is critical for agentic AI."

- The New Stack. (2026). "Why AI Workloads Are Fueling a Move Back to Postgres."

- HackerNoon. (2026). "Database Evolution: From Traditional RDBMS to AI-Native and Quantum-Ready Systems."

- GibsonAI. (2025). "RAG vs Memory for AI Agents: What's the Difference?"

- The New Stack. (2026). "Memory for AI Agents: A New Paradigm of Context Engineering."

- Kleppmann, M. (2017). Designing Data-Intensive Applications. O'Reilly Media.