The Business Problem No Dashboard Solves

Modern enterprises operate on structured data (Database). Yet in many organizations, that data remains inaccessible to key decision-makers — whether a logistics company analyzing on-time delivery rates and route efficiency, a real estate brokerage evaluating housing sales performance by broker or agent, or a restaurant group identifying top-selling dishes and menu performance across locations.

The Hidden Tax on Every Data Team

Business Intelligence tools handle fixed dashboards well — but break down the moment someone needs a one-off join, a custom filter, or an exploratory slice. The fallback is exporting CSVs, filing data tickets, or waiting 2–3 days for a report. Data teams become report factories. Decisions wait.

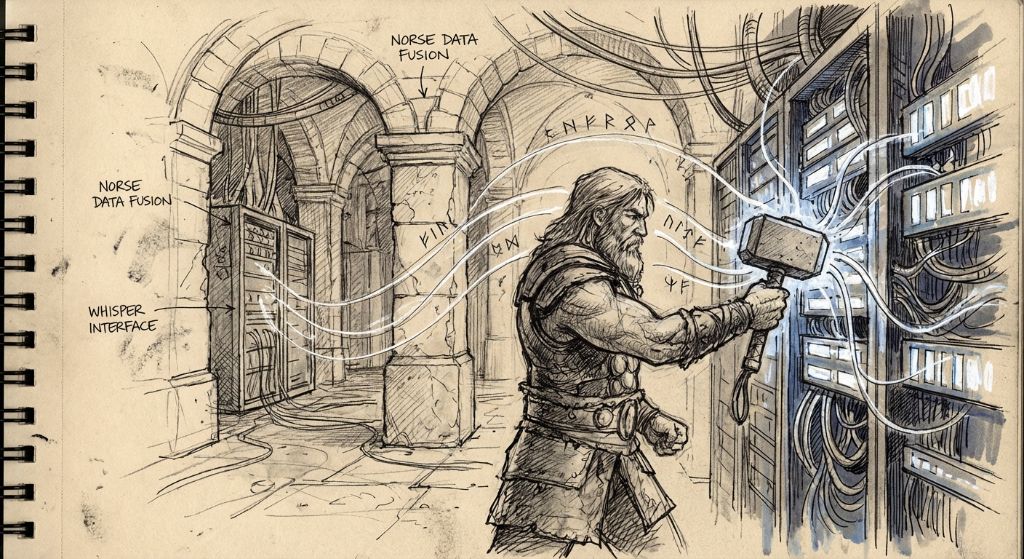

This is the problem my team at eSapiens set out to solve with the THOR Module — Transformer Heuristics for On-Demand Retrieval. We published the architecture and results as a research paper on arXiv (arXiv:2507.09592) in July 2025. This post translates the technical paper into a practical breakdown for software founders and technical leaders who want to understand what we built, how it works, and what it means for your product.

THOR converts plain-language questions into verified, read-only SQL analytics for enterprise databases — then goes further, generating human-readable narratives and handing raw data to a downstream visualization and forecasting engine. Non-technical users get answers in seconds, not days.

What Is THOR?

THOR (Transformer Heuristics for On-Demand Retrieval) is a Text-to-SQL engine — but calling it that undersells it. Most Text-to-SQL prototypes fail in production because they are single-step: they send a natural-language question to an LLM and hope the SQL comes out right. They don't handle schema ambiguity, fuzzy text matching, unit conversion, future-dated data, or execution errors. They certainly don't enforce compliance guardrails.

What Makes THOR Different

THOR is a closed-loop, multi-agent system. It separates orchestration from execution, wraps generation in a self-correction loop, enforces read-only compliance by construction, and produces human-readable insights — not just raw SQL output. It was built to pass enterprise compliance review, not just academic benchmarks.

The full technical specification, architecture diagrams, and Appendix with natural-language prompts and generated SQL are available in the original paper: THOR: Transformer Heuristics for On-Demand Retrieval (Shi, Li, Liu, Wang, He, Yang, Shi — eSapiens, arXiv 2025).

How It Works: A 5-Step Pipeline

THOR uses a decoupled orchestration/execution architecture — the orchestration layer decides what to do, and the execution layer does it. This separation is what makes the system composable, debuggable, and safe. Here is the complete pipeline:

Task Analysis & Routing — Supervisor Agent

A Supervisor Agent receives the natural-language question, determines whether it requires structured database querying (versus a conversational answer), and routes it to the SQL generation lane. This prevents the system from wasting LLM cycles on questions that don't need SQL.

Schema Injection — Dynamic Context Retrieval

The most underrated step. THOR dynamically fetches the relevant table names, column names, and field semantics for the user's question and injects them into the LLM prompt at query time. This is what allows the system to work across arbitrary enterprise schemas — no hardcoded table knowledge required.

SQL Generation — GPT-4o, Read-Only Enforced

The SQL Generation Agent constructs a single SELECT statement using the injected schema context. By design, it only emits SELECT — never UPDATE, DELETE, or INSERT. This read-only guardrail is enforced architecturally, not by prompt instruction alone.

Self-Correction & Rating Loop

If the query returns an empty result, an execution error, or a low quality score, the Self-Correction Module fires automatically. It uses LLM feedback to diagnose the failure and regenerates the SQL — up to five times — before gracefully failing. This is the component that turns a "sometimes works" prototype into a production-grade engine.

Result Interpretation & Insight Delivery

Once a valid result is obtained, a Result Interpretation Agent converts the raw tabular data into a concise, human-readable narrative — key values, trends, and actionable highlights. The raw rows are simultaneously handed to the downstream Insight & Intelligence engine for charts, forecasts, or exports.

The Four Specialized Agents

Supervisor Agent

Routes incoming queries. Decides if structured SQL is needed or if the question can be answered conversationally. Acts as the traffic controller for the entire pipeline.

SQL Generation Agent

Converts natural language + schema context into a single verified SELECT statement. Uses GPT-4o with carefully engineered schema-aware prompts. Handles fuzzy matching, unit conversions, and date semantics.

Result Interpretation Agent

Turns raw query results into plain-English insights. Extracts key values, identifies trends, and writes a short narrative that non-technical users can act on immediately without parsing a table.

Self-Correction Module

Monitors every query execution for empty results, syntax errors, or low quality scores. Uses LLM feedback to diagnose the root cause, patches the SQL, and retries — up to five times. The bounded retry prevents infinite loops while maximizing resolution rate.

The Self-Correction Engine: Why It Matters

The self-correction loop is the single most important engineering decision in THOR. Every Text-to-SQL system generates wrong SQL sometimes. The question is: what happens next?

In most prototypes, the answer is: nothing. The user sees an error or an empty table and gives up. In THOR, when a failure is detected — whether it is a syntax error, an empty result set, or a quality score below threshold — the system automatically:

- Feeds the error back into the LLM with the original question, the failed SQL, and the error message as context

- Asks the model to diagnose the failure and propose a corrected query

- Re-executes the patched SQL against the live database

- Repeats up to five times — then gracefully fails with a clear explanation rather than looping indefinitely

What the Paper Demonstrated

Across all seven test prompts spanning logistics and retail schemas, the self-correction loop resolved failures that baseline agents could not recover from. Common failure modes it handled: ambiguous column names, schema mismatches, unit conversion errors (meters vs. miles), and future-dated data that needed relative date anchoring.

Semantic Guardrails Inside the Generation Step

Beyond retry logic, THOR embeds semantic heuristics directly into the SQL generation step. These include:

- Unit awareness — automatically converts distance fields from meters to miles when the question uses miles

- Fuzzy text matching — uses

LIKE '%hip-hop%' OR LIKE '%hip hop%' OR LIKE '%hiphop%'rather than exact string equality - Date anchoring — correctly handles "past 3 months" against datasets that contain future-dated rows

- NULL safety — uses

COALESCEand defensiveCASEbranches to prevent division-by-zero and null propagation

Enterprise Safety & Compliance: Built In, Not Bolted On

This is the part most AI demos skip. Letting an LLM touch a production database introduces two hard risks that will block any enterprise deal:

Write Risk

A hallucinated UPDATE or DELETE statement could corrupt or destroy live production data. No enterprise security team will approve a system where this is possible.

Compliance Risk

Query results may expose rows or columns shielded by row-level or column-level security policies. Without fine-grained auditing, NL-to-SQL systems fail compliance review — before they ever reach production.

THOR addresses both through architectural design, not just prompt engineering:

Read-Only Guardrail

The SQL Generation Agent emits only single-statement SELECT queries. This is enforced at the architecture level — the execution layer will reject any statement that is not a read-only query, regardless of what the LLM generated. Write operations are impossible by construction.

Column-Level Access Control

The schema injection step only injects metadata for tables and columns the requesting user is authorized to access. If a column is restricted, it never enters the prompt — so the model cannot reference it. The system correctly refuses unauthorized column requests, demonstrating data governance compliance in the paper's smoke tests.

Audit Logging

Every query — including the original natural-language question, the generated SQL, execution results, and any self-correction attempts — is logged. This provides a full audit trail for compliance review, security investigation, and model improvement.

Real-World Results: Logistics Case Study

The paper documents a logistics operational oversight deployment where THOR was connected to a live logistics platform spanning Warehouse Management (WMS), Transportation Management (TMS), and ERP data. The results were significant:

| Task | Before THOR | With THOR | Time Saved |

|---|---|---|---|

| Daily shipment status report | 2–3 hours manual SQL + formatting | Automated, runs on schedule | ~90% |

| Ad-hoc delay root cause query | Data team ticket, 1–2 days wait | Natural-language query, seconds | ~99% |

| Cross-system KPI join (WMS + TMS) | Custom report request, 3–5 days | Unified searchable workspace | ~95% |

| Periodic exception alert | Manual check, reactive | Scheduled alert, proactive | Continuous |

Key Outcome

Teams shifted from reactive to proactive operations. Front-line managers could ask questions like "What are the top 3 reasons for shipment delays last week?" and receive a verified, chart-ready answer in seconds — without filing a ticket or writing a single line of SQL.

SQL Quality Comparison: Where Baselines Fall Short

The paper includes a direct comparison of THOR against three anonymized baseline Text-to-SQL products across seven real-world prompts. The failure patterns were consistent and instructive. Here are three representative examples from the Appendix:

Prompt 3 Unit Conversion Awareness

"Top 10 regions by income per mile over past 3 months. Note: distance field is in meters."

Baseline (Product Y) — silently wrong:

-- Divides by meters directly — gives income per meter, not per mile

SUM(fee_total_calculated) / SUM(distance) AS income_per_mile

THOR — correctly converts units + handles zero-distance edge case:

CASE

WHEN SUM(dr.distance / 1609.34) = 0 THEN 0

ELSE SUM(dr.fee_total_calculated / 1000) /

SUM(dr.distance / 1609.34)

END AS revenue_per_mile

Prompt 5 Fuzzy Text Matching

"How many hip-hop tracks are there in the database?"

Baseline (Product Z) — misses variant spellings:

WHERE LOWER(genre) = 'hip hop'

-- Returns 0 if stored as "Hip-Hop" or "HipHop"

THOR — covers all three common spellings:

WHERE LOWER(genre) LIKE '%hiphop%'

OR LOWER(genre) LIKE '%hip hop%'

OR LOWER(genre) LIKE '%hip-hop%'

Prompt 6 Date Anchoring with Future Data

"Provide the sales data for the past three months." Note: the dataset contains future-dated rows.

Both baseline products used standard relative date filters without an upper bound — causing them to include future invoice rows in "past 3 months" results. THOR generated an explicit date range with a BETWEEN clause anchored to the actual current date, excluding future-dated rows correctly.

The full SQL listings for all seven prompts are published in Appendix A of the paper at arXiv:2507.09592.

Known Limitations — We're Being Honest

The paper documents three current limitations that are important to understand if you are evaluating THOR for production deployment:

Complex Relational Logic

Performance on queries requiring multiple nested subqueries or advanced window functions has not been fully validated. For highly complex analytical queries, human review of generated SQL is still advisable.

Large-Scale Schema Scalability

Dynamic schema injection faces LLM context window limits at enterprise data warehouses with hundreds or thousands of tables. Current architecture works well for departmental databases and mid-scale warehouses — very large schemas require additional filtering before injection.

Domain-Specific Jargon

Without a dedicated semantic layer, the system relies on the LLM's general knowledge. Industry-specific terminology, proprietary metric names, or implicit business rules (e.g., "active customer" means different things in different companies) may produce logically valid but contextually wrong results.

What's Coming Next

The paper outlines three specific engineering workstreams on the near-term roadmap, each directly targeting one of the current limitations:

Domain Schema Enrichment & Synonym Mapping

A lightweight dictionary linking business terms, acronyms, and metric names to their underlying tables and columns. Injected into the prompt, this helps the model choose the correct field even when users ask with informal or company-specific phrasing — addressing the jargon problem directly.

Graph-Aware Join Planner

An internal graph of foreign key relationships and common join paths. Before generating SQL, the system will propose valid join templates — reducing accidental Cartesian products and missing link errors that are a common failure mode in multi-table queries.

Live Execution Feedback Loop & Nightly Fine-Tuning

Real-world database error messages and result sanity checks will feed into a nightly fine-tuning script and a growing regression suite. The model will learn from production missteps over time — making THOR a self-improving engine rather than a static deployment.

The Founder Takeaway

If you are building a B2B SaaS product that touches enterprise databases, there are three architectural lessons from this work that apply directly to your product decisions:

Try It Yourself

The THOR module powers the data conversation layer inside eSapiens. You can explore the platform at esapiens.ai, and scan your own database's structural health with Thor ThunderScan — which uses the same schema analysis engine to identify structural risks, performance gaps, and AI-readiness scores across your production database.

Sources & Further Reading

- Shi, I., Li, Z., Liu, F., Wang, W., He, L., Yang, Y., & Shi, T. (eSapiens, 2025). THOR: Transformer Heuristics for On-Demand Retrieval — An LLM Solution Enabling Conversation with Relational Databases. arXiv:2507.09592 [cs.DB]

- Zhong, V., Xiong, C., & Socher, R. (2017). Seq2SQL: Generating Structured Queries from Natural Language using Reinforcement Learning. arXiv:1709.00103

- Xu, X., Liu, C., & Song, D. (2017). SQLNet: Generating Structured Queries without Reinforcement Learning. arXiv:1711.04436

- Yu, T. et al. (2018). Spider: A Large-Scale Human-Labeled Dataset for Complex and Cross-Domain Semantic Parsing and Text-to-SQL Task. EMNLP 2018

- Wang, B. et al. (2020). RAT-SQL: Relation-Aware Schema Encoding and Linking for Text-to-SQL Parsers. ACL 2020

- Scholak, T., Schucher, N., & Bahdanau, D. (2021). PICARD: Parsing Incrementally for Constrained Auto-Regressive Decoding from Language Models. arXiv:2109.05093

- Gao, D. et al. (2023). DAIL-SQL: Efficient In-Context Learning for Text-to-SQL with Data Administration Instructions and Augmented Language. arXiv:2308.05389

- Aiweiya, N. & Li, J. (2024). LLM-Enhanced Text-to-SQL: A Survey of Prompt Design, Schema Retrieval, and Agent Pipelines. arXiv:2401.03714